Death by A.I.

New "Autonomous Warfare Center" will automate targeted killings

The U.S. military’s secretive Special Operations Command plans to establish its first-ever center for AI-driven missions like targeted assassinations.

Autonomous warfare is all the rage at the Pentagon, where computers and artificial intelligence process intelligence data, select targets and then transmit kill orders to a waiting robot or to a “loitering” missile or aircraft.

The new “Special Operations Autonomous Warfare Center” is referenced in the $1.5 trillion Department of War budget request to Congress this week.

Special Operations Forces refers to commando units like the Navy SEALs, Army Green Berets, Marine “Raiders” and others who support “unconventional” warfare and, since 9/11, targeted killing. SEAL Team 6 and the Army’s Delta Force are two of the most infamous of the secret units, and have been central to capture and decapitation operations like those in Venezuela and Iran.

One can say a lot of things about the rapid and chaotic adoption of artificial intelligence in the American military. But in this context, the term “autonomous warfare” is a euphemism for automated killing. (Autonomous intrinsically means acting independently, governing internally, or operating without external control.)

An unusually frank description of the role AI will play in the future comes from former Joint Special Operations Command chief Gen. Stanley McChrystal (ret.), who compared AI to “infant Hercules,” invoking the Greek demigod’s own role as a killer even in infancy.

McChrystal says:

“And like the infant Hercules who strangled two snakes sent by Hera to kill him in his crib, AI will grow to be strong — and a part of almost everything we do going forward.”

What McChrystal doesn’t mention is what happened when Hercules grew up. He spent his life carrying out killings on the orders of Eurystheus — a weak, cowardly king who hid in a jar and never had to understand anything. Hercules’s overwhelming power substituted for strategy.

In the most famous of his myths, Hercules fought the Hydra, a monster that grew two heads for every one he cut off. He was strong enough to keep swinging, but power alone only multiplied the problem. He had to change his approach entirely to win. It’s a useful parable for a military that has spent two decades perfecting decapitation strikes only to watch the threats multiply.

Welcome to the era of CombatGPT. Since 2022, the Pentagon has used AI in quarterly exercises for “target detection” involving personnel from all six military service branches. The computers pull together the ocean of information collected every day, indeed every minute, extract what the AI program judges most important, and aggregate and geolocate the blips and dots into a potential target.

During the most recent Iran War, Middle East commander Adm. Brad Cooper felt it necessary to give assurances that the use of AI still includes a “human-in-the-loop” to make decisions. “Humans will always make final decisions on what to shoot and what not to shoot, and when to shoot,” he said. That statement, of course, contradicts the very idea of “autonomous.”

Cooper’s assurance echoes a long line of similar promises. The Air Force said the same thing about drones before the unmanned kill chain was compressed to the point where the “decision” became a rubber stamp. The pattern is consistent: a new capability is introduced with human-in-the-loop safeguards, the speed and scale of operations turn those safeguards into a bottleneck, and the safeguard shrinks to the equivalent of the “Agree to terms” button that most people click without thinking.

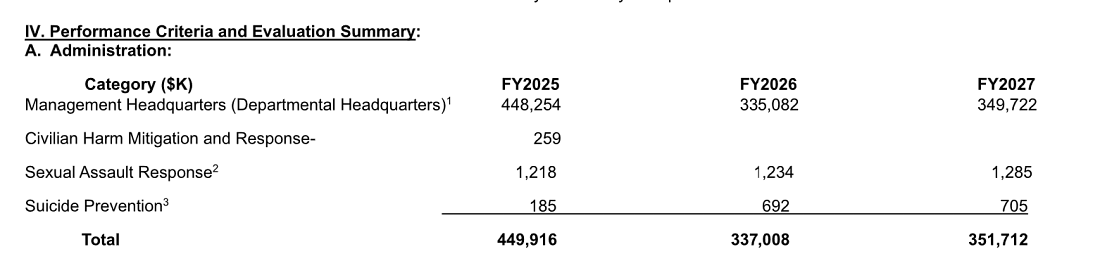

(Note that the FY 2027 budget request also zeroes out funding for Pentagon work in civilian harm “mitigation,” eliminating the element that might also be able to “autonomously” warn of direct civilian casualties and damage.)

The budget document doesn’t say what the Autonomous Warfare Center’s initial budget will be, but it doesn’t need to be large. The infrastructure already exists. Two decades of decapitation strikes have produced the targeting architecture, the intelligence pipelines, the kill chains. What AI does is remove the last friction: the human time spent between correlating the intelligence and attacking a target.

Much of what the Special Operations Autonomous Warfare Center will be working with is already there. Both in Ukraine and in Iran, the military sees its challenge as dealing with “swarms” of low-cost enemy weapons, from one-way attack drones to relatively rudimentary ballistic missiles.

The Miami-based Southern Command, which is responsible for the shooting part of the drug war, has also established its own autonomous command to automate the tracking and destruction of drug shipments, where the speedboats and mini-submarines serve as the low-cost targets.

Nobody in Congress has so far asked about the creation of “autonomous” killing commands. The budget line item will likely pass without a hearing, buried in a special operations (and mostly classified) budget that receives less oversight than virtually any other part of the Pentagon. By the time the public learns what the Autonomous Warfare Center actually does, it will have been doing it for years.

McChrystal is right that AI will be “a part of almost everything we do going forward.” What he left out — what the myth he invoked actually teaches — is that the strongest weapon in the world is only as good as the mind wielding it. Hercules eventually learned that.

Will the Pentagon?
